
Delving into a new era of art technology powered by AI

27 Jul 2022

Throughout history, art has always worked hand in hand with technology to push new frontiers. From mining raw materials for sculptures to rendering computer graphics, technology has long provided artists with diverse tools for artistic creation and expression. As artificial intelligence (AI) has increasingly become part of our everyday lives, it has also presented endless opportunities for creative arts talent to explore novel art forms and reimagine what’s possible.

Last year, HKBU was awarded HK$52.8 million in research funding by the Theme-based Research Scheme (11th round) under the Research Grants Council for a five-year project. The pioneering project, entitled “Building Platform Technologies for Symbiotic Creativity in Hong Kong”, is led by Professor Guo Yike, Vice-President (Research and Development), with Professor Johnny Poon, Associate Vice-President (Interdisciplinary Research), serving as deputy project coordinator.

Under this innovative art-tech project, the multidisciplinary research team led by HKBU is developing platform technologies for symbiotic creativity, providing unlimited art content for humans to usher in a new era of art technology. Initiatives associated with the project include an art data repository, an AI creative algorithm system, a research theatre, a digital art and policy network, and some unique and creative application projects.

A groundbreaking collaboration between humans and machines

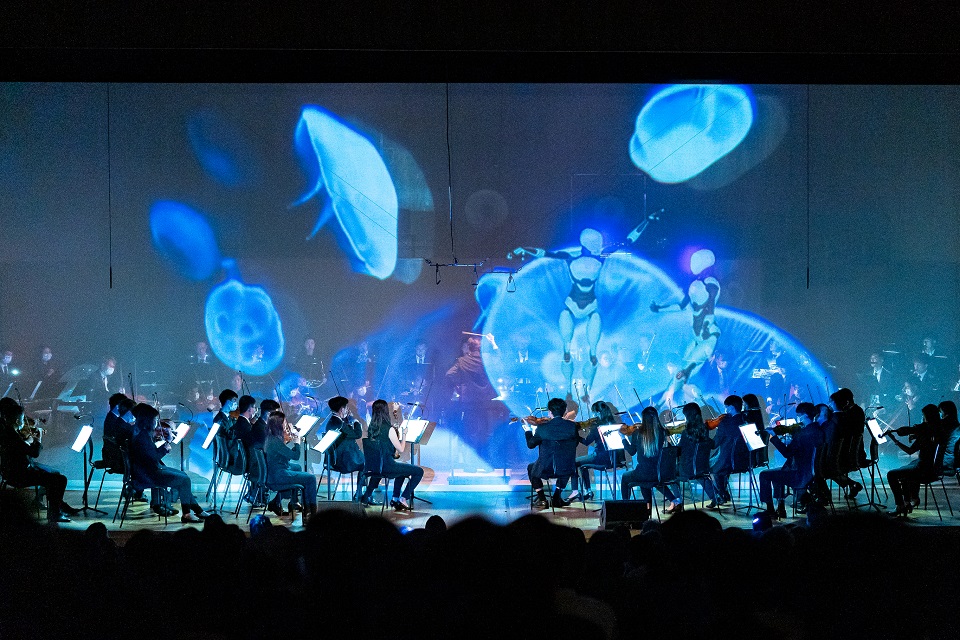

This month, the University presented a significant outcome of the research project at the Hong Kong Baptist University Symphony Orchestra (HKBU Symphony Orchestra) Annual Gala Concert, where an innovative performance showcasing human creativity alongside AI premiered at Hong Kong City Hall.

Dubbed “A Lovers’ Reunion”, the Concert echoed the celebration of the 25th anniversary of Hong Kong’s reunification with the motherland, and it attracted about 800 people, including Mr Kevin Yeung Yun-hung, Secretary for Culture, Sports and Tourism, and other distinguished guests. The innovative performance was the first human-machine collaboration of its kind in the world and showcased how AI can be a creative force that performs music, dances and creates cross-media art.

The AI technologies developed for the performances were provided by researchers from the Augmented Creativity Lab, which is led by Professor Guo. The team comprised Professor Johnny Poon, as well as Dr Liu Qifeng, Associate Professor of Practice, Dr Xue Wei, Assistant Professor, and Dr Chen Jie, Assistant Professor of the Department of Computer Science.

Professor Guo says, “The first human-machine collaborative performance of its kind in the world presented by HKBU at the Gala Concert is an important outcome of the ‘Building Platform Technologies for Symbiotic Creativity in Hong Kong’ research project. It is also a milestone of AI research revealing the unlimited potential of human-machine symbiotic creativity.”

Computer as a virtual choir

In a highly expressive performance, the HKBU Symphony Orchestra shared the stage with an AI virtual choir to perform a newly arranged choral-orchestral version of the song Pearl of the Orient. It marked the first time in the world that an AI choir had combined with a machine-generated visual storyteller to perform interactively with a conductor and an orchestra.

The AI choir comprised the voices of 320 virtual performers, but the tool was trained on the audio recordings of just a few professional singers. Dr Xue Wei says, “The singers were not asked to sing Pearl of the Orient specifically, and we also recorded their speaking voice.” Based on the data collected from human singing and speaking, Dr Xue and his team extracted key features of vocal singing, and they developed generative models of the singers’ voices. As the algorithmic training progressed, the researchers provided additional data to improve the AI singers’ pitch, melody and expressiveness and enable the tool to express emotions artistically in accordance with the music. The resulting four-part virtual choir included not only voices sharing the timbre of the recorded singers but also “interpolated singers”, virtual performers generated with new timbres of their own.
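The article does not say how the “interpolated singers” were generated. As a rough illustration only, one common way to obtain a new timbre is to blend the feature vectors of two recorded voices. The sketch below assumes each voice is summarised by a fixed-length list of numbers; the function name `interpolate_timbre` and the vectors are hypothetical and are not taken from the HKBU system.

```python
def interpolate_timbre(voice_a, voice_b, weight):
    """Blend two timbre vectors (hypothetical representation).

    weight = 0.0 returns voice_a, weight = 1.0 returns voice_b,
    and values in between give a new, intermediate timbre.
    """
    if len(voice_a) != len(voice_b):
        raise ValueError("timbre vectors must have the same length")
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    # Linear interpolation, applied to each feature independently.
    return [(1.0 - weight) * a + weight * b for a, b in zip(voice_a, voice_b)]


# Made-up two-dimensional timbre vectors for two recorded singers.
singer_a = [0.0, 2.0]
singer_b = [1.0, 4.0]
new_singer = interpolate_timbre(singer_a, singer_b, 0.5)
```

Varying the weight across many pairs of recorded voices is one plausible way a handful of singers could yield a large choir of distinct virtual voices.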

Visual storytelling by an AI media artist

The researchers also trained an AI artist to learn the music and lyrics of the choral piece. The AI artist associated the underlying meaning of the lyrics with an appreciation of the beauty of Hong Kong, and it used this information to create a cross-media visual narrative which portrayed its aesthetic imagination of the song. “The dynamic visuals are generated according to the lyrical content, and the vantage point of the visuals also changes in keeping with the melody,” says Dr Liu Qifeng, who was involved in the development of the learning algorithm for training the AI artist. “The higher the pitch, the higher the vantage point. When the tempo of the song increases, the speed of the visual content also picks up. The content generated by the AI artist is driven by the elements of the musical piece.”
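The relationship Dr Liu describes, in which pitch drives the vantage point and tempo drives visual speed, can be pictured with a small sketch. This is not the team’s algorithm: the function name `visual_params`, the pitch range, the height range and the reference tempo are all assumptions chosen for illustration.

```python
import math


def visual_params(pitch_hz, tempo_bpm,
                  pitch_range=(110.0, 880.0),   # assumed vocal range in Hz
                  height_range=(0.0, 100.0),    # assumed camera heights
                  base_tempo=90.0):             # assumed reference tempo
    """Map a note's pitch and the current tempo to (camera_height, speed)."""
    low, high = pitch_range
    # Clamp the pitch so out-of-range notes stay within the height range.
    pitch = min(max(pitch_hz, low), high)
    # Pitch is perceived logarithmically, so interpolate on a log scale.
    fraction = math.log2(pitch / low) / math.log2(high / low)
    h_low, h_high = height_range
    camera_height = h_low + fraction * (h_high - h_low)
    # Visual speed scales in proportion to tempo.
    speed = tempo_bpm / base_tempo
    return camera_height, speed


low_note = visual_params(220.0, 90.0)
high_note = visual_params(440.0, 180.0)
```

Here the higher note yields a higher vantage point, and doubling the tempo doubles the speed of the visuals.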

According to Dr Liu, the machine-powered media artist model uses textual lyrics as its sole input, whereas conventional AI style transfer techniques often import images as a reference for the algorithms to mimic. The vivid artwork generated by the AI artist not only showcased the machine’s vision of Hong Kong as the Pearl of the Orient, but also informed the audience of how visual storytelling has evolved in the age of AI.

Dance sequences devised by algorithms

Another highlight of the Concert was a ballet performance featuring AI virtual dancers in Ravel’s Daphnis et Chloé, accompanied live by the HKBU Symphony Orchestra. The choreography drew on the natural world, with dance movements inspired by a newly discovered species of box jellyfish in Hong Kong.

In collaboration with the Hong Kong Dance Company, Dr Chen Jie and his team employed motion capture techniques and collected movement data from the professional dancers who performed to the music. The scientists then trained the AI choreographic tool to learn the underlying emotional and aesthetic connections between music and dance. “The dancers’ movements are defined by how they interpret the music. The AI algorithms have to parse their movements so that they can learn how the dancers interpret the music and dance expressively,” says Dr Chen.

The team also extracted and embedded choreomusical rules, such as beat alignment, transition and combination of dance sequences, into the generative models. The machine has been trained to identify patterns in natural scenery, and it used these patterns to choreograph the ballet. The generated dance was performed by virtual dancers in a natural and artistic way based on the machine’s own understanding of the music.
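Beat alignment, one of the choreomusical rules mentioned above, can be illustrated with a simple score: the share of dance accents that fall close to a musical beat. The sketch below is a generic illustration rather than the team’s implementation; the function name `beat_alignment`, the tolerance and the timestamps are assumptions.

```python
def beat_alignment(music_beats, motion_beats, tolerance=0.1):
    """Return the fraction of motion accents within `tolerance` seconds
    of any musical beat. All times are in seconds."""
    if not motion_beats:
        return 0.0
    hits = sum(
        1
        for motion_time in motion_beats
        if any(abs(motion_time - beat) <= tolerance for beat in music_beats)
    )
    return hits / len(motion_beats)


# Made-up timestamps: beats every half second, three dance accents.
music = [0.0, 0.5, 1.0, 1.5]
dance = [0.02, 0.48, 1.3]
score = beat_alignment(music, dance)  # two of the three accents are on a beat
```

A generative model could use a score of this kind as a constraint or a training signal, so that generated movements land on the music rather than drifting away from it.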

Opening up new avenues in the world of arts

Besides providing the audience with an immersive cross-media performance, the Concert also spotlighted the artistic prowess of HKBU’s award-winning student musicians in the performances of Saint-Saëns’s Introduction and Rondo Capriccioso in A minor, Op. 28; Borne’s Fantaisie brillante sur ‘Carmen’; and Lauryn Kurniawan’s Rasa for string quartet and gamelan.

Professor Johnny Poon, who was also the music director and conductor of the HKBU Symphony Orchestra and the Collegium Musicum Hong Kong, says, “In addition to celebrating HKBU’s young musicians, the innovative concert showcased how the University is using technology to push the envelope of human imagination in the arts and cultural sphere. Our art-tech research also enables musicians and artists to go beyond the traditional forms and interact with the audience in brand new ways.”

Looking beyond the artistic applications featured in the Concert, the HKBU-led research team is continuing to develop innovative technologies that can foster a new direction in art created by both humans and machines.

Professor Guo Yike says, “HKBU is dedicated to building a world-class AI art-tech platform that will drive a new revolution that transforms the creative and cultural industries. It will enable Hong Kong to assume a leading position in art-tech on the global stage.”