Discover HKBU

From early cinema to AI-powered artistic experience

31 Jul 2023

In 1922, the director Dudley Murphy created the silent film Danse Macabre, envisioning a colourful horror film synchronised with the tone poem of the same name composed by Camille Saint-Saëns in 1874. However, limited by the technology of the time, the film relied on a technique called colour tinting and toning, which coloured entire scenes in a single shade.

Little did Murphy know that his original vision for the film would come to life some 100 years after its premiere.

Last month, audiences at the Hong Kong Baptist University Symphony Orchestra (HKBU Symphony Orchestra) Annual Gala Concert were captivated by a full-colour restored version of Murphy’s film, screened while the orchestra performed Saint-Saëns’ piece live in sync with the picture.

The innovative performance marked a multidisciplinary research collaboration between the University’s artists and scientists. “Music and visual media have always been closely intertwined. Our concert brought to the stage a cross-disciplinary and cross-media artistic performance by integrating music and a classical silent film, which was restored and enhanced through the use of artificial intelligence technologies,” says Professor Johnny Poon, Associate Vice-President (Interdisciplinary Research) and Dean of the School of Creative Arts, who is also the Music Director and Conductor of the HKBU Symphony Orchestra and the Collegium Musicum Hong Kong.

How AI revives the early cinema

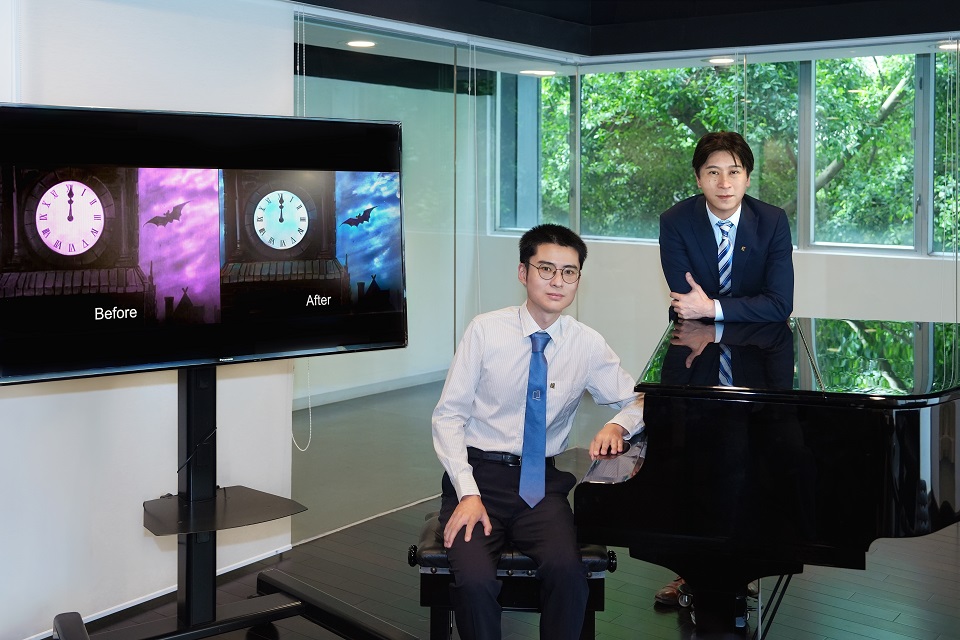

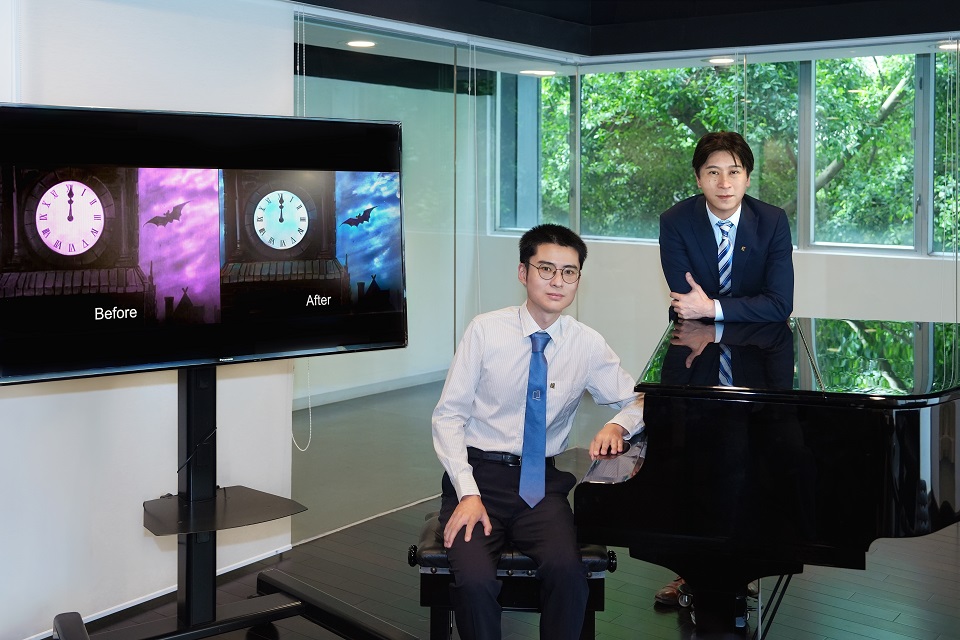

Dr Wan Renjie, Assistant Professor in the Department of Computer Science, was involved in restoring and colourising the century-old silent film. “The quality of the film deteriorated over time, and there was a lot of noise in the visual data, which greatly affected the viewing experience. To restore the film, our team used AI technologies to remove video noise, reduce flickering and increase the resolution of the film,” he says.

Another important aspect of the restoration process was adding realistic colours to the monochrome film. To achieve this, the team trained machine learning algorithms to colourise low-quality monochrome video frames. “The techniques in image colourisation for static photos are well developed, but the challenge in colourising movies is that they contain a series of images to create a persistence of motion. Therefore, when colourising videos, we have to consider the relationship between each frame to ensure colour consistency,” says Dr Wan.
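To make the frame-to-frame consistency problem concrete, here is a minimal sketch of one simple, classical way to damp colour flicker: an exponential moving average over each frame's predicted colour values. This is not the team's method, which the article describes only as trained machine learning models; the function names, the flattened one-value-per-pixel frame format and the `alpha` parameter are hypothetical and chosen for illustration.

```python
from typing import List

# Hypothetical frame format: one predicted chroma value per pixel, flattened.
Frame = List[float]


def smooth_chroma(frames: List[Frame], alpha: float = 0.5) -> List[Frame]:
    """Blend each frame's predicted colour with the previous smoothed frame.

    An exponential moving average: alpha near 1.0 trusts the current frame,
    alpha near 0.0 favours consistency with earlier frames.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: List[Frame] = []
    for frame in frames:
        if not smoothed:
            smoothed.append(list(frame))  # the first frame is kept as predicted
            continue
        prev = smoothed[-1]
        if len(prev) != len(frame):
            raise ValueError("all frames must have the same number of pixels")
        smoothed.append([alpha * c + (1.0 - alpha) * p for c, p in zip(frame, prev)])
    return smoothed


def max_frame_jump(frames: List[Frame]) -> float:
    """Largest per-pixel colour change between consecutive frames."""
    return max(
        (abs(c - p) for prev, cur in zip(frames, frames[1:]) for c, p in zip(cur, prev)),
        default=0.0,
    )


if __name__ == "__main__":
    # Colour predictions that flicker from frame to frame.
    raw = [[0.2, 0.8], [0.6, 0.4], [0.2, 0.8]]
    smooth = smooth_chroma(raw, alpha=0.5)
    print(max_frame_jump(raw), max_frame_jump(smooth))
```

A fixed moving average like this also blurs genuine colour changes, such as a character walking into a differently lit room, which is one reason learned approaches that reason about motion between frames are used in practice.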

The team also employed a technique called Neural Radiance Fields (NeRF), which builds a 3D representation of a scene from a set of 2D images. “From the images, we can extract the necessary features for colourisation and construct a colourised video sequence,” says Dr Wan.

Professor Poon believes the technologies that powered the novel audio-visual experience at the concert can provide contemporary audiences with more opportunities to appreciate early cinema. “These technologies not only restore damaged silent films, but they also make early cinema relevant and accessible, allowing more people to better understand the cultural values and human experiences of the past,” he says.

Opening new avenues for knowledge

Supported by the University’s Blue Sky Research Fund, Dr Wan is leading a two-year research project to rejuvenate classical silent films using AI tools. In addition to the technologies unveiled at the concert, the research team is developing generative AI models to enhance the colour palette and stability of silent films.

The team’s next step is to deploy generative models that can create new content to fill the narrative gaps left in silent films by frames lost to deterioration. According to Dr Wan, the team will use advanced natural language processing and machine learning algorithms to generate video content from text while keeping the colouration and video quality consistent.

Dr Wan says: “Images and videos are crucial media that we use to perceive the world. Therefore, improving the quality of visual media has always been one of our goals in developing image and video restoration techniques. We can also apply these technologies to enhance the photo and video quality of smartphone cameras.”

Looking ahead, Professor Poon believes that the technologies being developed by the research team have great potential for preserving classical films for future generations and opening new avenues for knowledge and discovery. “AI technologies and applications have unlocked new opportunities for restoring and preserving history and cultural heritage. In the future, we can use these technologies to reconstruct urban scenes of the past. This year’s concert showcased how transdisciplinary research at HKBU inspires imagination and advances knowledge discovery,” he says.